With the advent of the internet of things, the amount of data being collected around the world is growing rapidly. We collect some data with a current need in mind, some for future purposes, and some just to have it in case we find a way to use it later.

In a smart building, occupant tracking data switches lighting and HVAC systems on and off to reduce energy consumption. That data is then stored to help artificial intelligence systems predict future use and flow around the facility. We collect and store all manner of data from every system around the building, believing that it will one day help us answer a question we have yet to consider.

Each new data stream feeds into a data lake, and data that has been used remains stored, so this lake will, it seems, grow and grow forever. Like a natural lake, this digital lake needs space to exist, and each year we hear about the latest, biggest data center in the world. The growing problem is that it will become increasingly difficult to find, combine and use information in this endless sea of data we are creating.

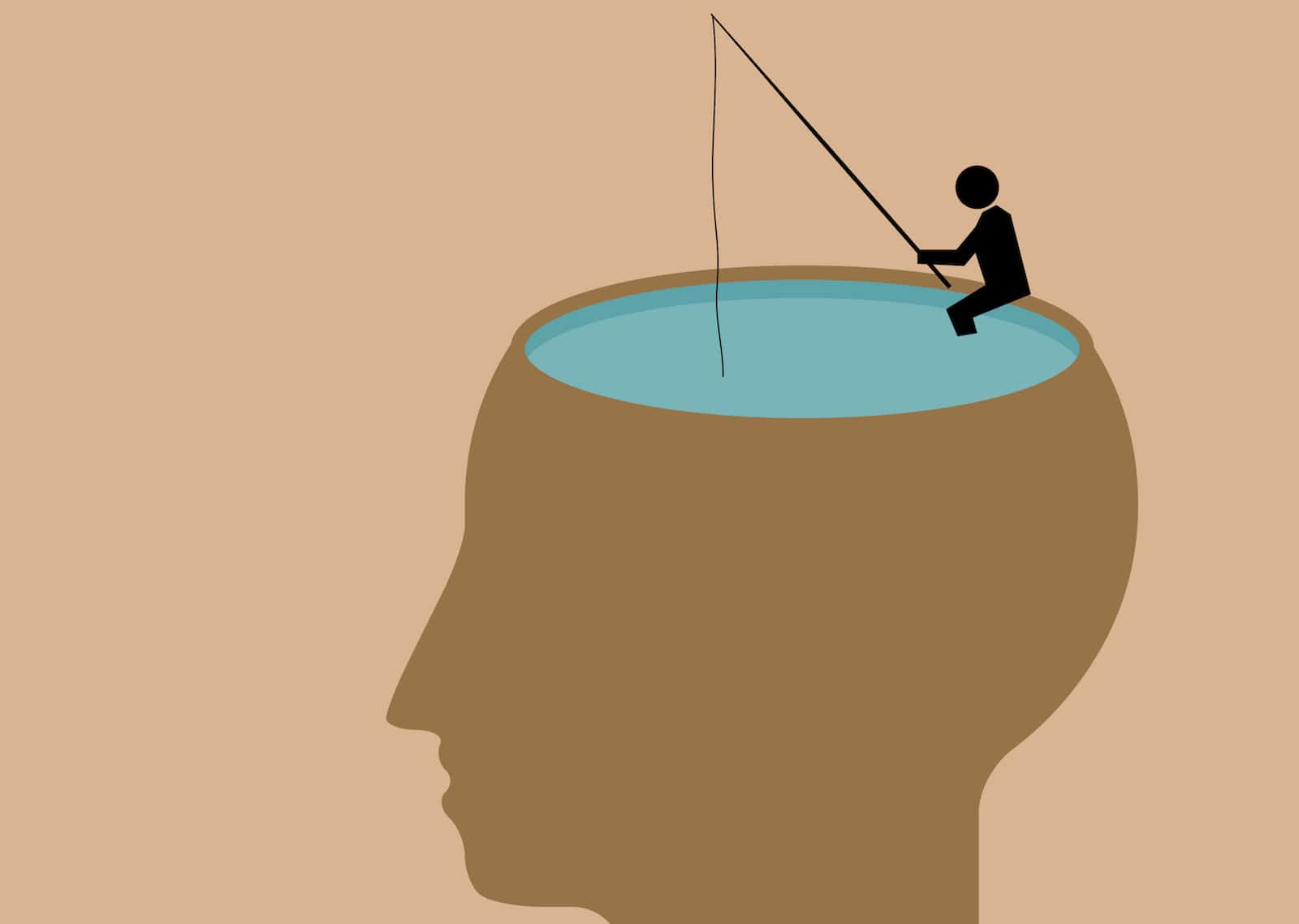

An important question has been rising to the surface: do we solve this problem by finding better ways to access this growing pool of data, or do we strive to limit the data entering this pool?

“You need a mechanism that can be controlled, governed as a process, to deliver exactly what you need into the data lake. And not just dump information in there,” says Chuck Yarbrough, the senior director of solutions marketing and management at Pentaho.

Without some smart management for the data going into the lake you’re going to end up with a “toxic dump,” suggests Yarbrough. However, we cannot currently envisage every use we may have for this data, so any smart management of the data going into the lake will inevitably discard data that may well be valuable one day. Until it is valuable, however, it is costly, both in terms of physical storage and in how much it hampers the ability to find information when it is needed.
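As a minimal sketch of what a controlled, governed ingestion step could look like, the Python below admits a record only if it comes from a registered stream and carries the fields declared for that stream. The `IngestionGate` class, the stream names and the field names are all hypothetical, chosen for illustration; this is not Pentaho's product or any particular tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class IngestionGate:
    """Hypothetical gate that admits only records matching a declared policy."""

    # Maps a stream name to the set of fields a record must carry.
    required_fields: dict
    lake: list = field(default_factory=list)
    rejected: int = 0

    def ingest(self, stream: str, record: dict) -> bool:
        required = self.required_fields.get(stream)
        # Unregistered streams and incomplete records are counted, not stored.
        if required is None or not required.issubset(record):
            self.rejected += 1
            return False
        # Admitted records are stored unmodified, tagged with their stream.
        self.lake.append({"stream": stream, "raw": record})
        return True


# Illustrative policy: only occupancy readings with these fields are admitted.
gate = IngestionGate(required_fields={"occupancy": {"zone", "count", "timestamp"}})
gate.ingest("occupancy", {"zone": "L2-east", "count": 14, "timestamp": "2017-01-09T08:30:00Z"})
gate.ingest("occupancy", {"zone": "L2-east"})  # missing required fields
gate.ingest("vending", {"item": "coffee"})     # stream not registered
```

Counting rejections rather than silently dropping them keeps the trade-off visible: the operator can see how much data the policy is discarding and revisit it if that data later looks valuable.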

There is another problem too: data collected from a plethora of smart building and IoT systems arrives in different volumes, varieties, velocities and veracities. Data must be kept in its raw form so that it can be used in all the unimagined ways it may one day be useful. This makes data storage less efficient and categorization across many data sets much more challenging.

A quote often attributed to Albert Einstein holds that “we can’t solve problems by using the same kind of thinking we used when we created them.” Having categorized, classified and tabulated data in relational databases for many years, we realized that we had created a problem when faced with multifaceted IoT data. The solution was NoSQL (commonly read as “Not only SQL”): non-relational databases that are searchable and usable despite the diversity of data formats present.

While not as functional as SQL databases, NoSQL allows us to store all the raw IoT data we collect more efficiently and in a way that we can use. Dan Kogan, the director of product marketing at Tableau, says we are shifting toward “faster databases like Exasol, MemSQL and Kudu.” He was speaking specifically about cloud platforms and new innovations like Amazon Athena, which are allowing us to turn giant S3 “data lakes” into actionable analytics without investing in new infrastructure or tools.

NoSQL solutions such as MongoDB and Redis grew significantly in popularity in 2016, spelling the end for SQL, or so we thought. Lloyd Tabb, the founder and chairman of analytics firm Looker, says that Google BigQuery is “essentially infinitely scalable and fully ANSI compliant,” suggesting it will reinvigorate the case for SQL.

“You can’t just plan your lake as a data repository. You also need to plan the toolage around it,” says Philip Russom, the senior research director of data management at TDWI.

Russom believes we should be relying on data integration tools and infrastructure to make that controlled, governed process possible. He highlights metadata management and increased integration with other data warehouses. He supports the creation of metadata as data is ingested, to enable more “on-the-fly” data modeling. Russom’s approach is not about ditching older data management processes, but instead about refining them for each data lake’s specific characteristics.
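To make the idea of creating metadata at ingestion concrete, here is a small Python sketch that stores nothing about the payload except a descriptive catalog entry, leaving the raw bytes untouched. The function name `describe_on_ingest`, the catalog fields and the sample sources are assumptions made for illustration, not a description of any specific data integration product.

```python
import hashlib
import json
from datetime import datetime, timezone


def describe_on_ingest(source: str, raw: bytes) -> dict:
    """Build a hypothetical catalog entry for a raw payload without altering it."""
    entry = {
        "source": source,
        "ingested_at": datetime.now(timezone.utc).isoformat(),
        "size_bytes": len(raw),
        "sha256": hashlib.sha256(raw).hexdigest(),
        "format": "unknown",
        "fields": [],
    }
    try:
        parsed = json.loads(raw)
    except ValueError:
        # Not JSON (or not decodable): keep the entry, leave the format unknown.
        return entry
    entry["format"] = "json"
    if isinstance(parsed, dict):
        # Record top-level field names so the payload can be found and modeled later.
        entry["fields"] = sorted(parsed)
    return entry


# Illustrative payloads from two made-up building systems.
catalog = [
    describe_on_ingest("hvac", b'{"temp_c": 21.5, "zone": "L2-east"}'),
    describe_on_ingest("legacy-meter", b"temp=21.5;zone=L2"),
]
```

Because the entry records the source, format and field names, a later query can locate candidate data sets by searching the catalog rather than scanning the raw payloads themselves.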

Whichever way you look at it, SQL or NoSQL, keeping all data or only some, we must accept that data lakes will continue to grow rapidly. We probably also need to accept that some data will have to be discarded before entering the lake. We need to find a balance between these factors, and we need to develop better ways to store and access multifaceted IoT data.

In 2017, we can expect more data owners around the smart building and IoT space to face the reality that the situation cannot continue along this path. To justify the cost of keeping data, they must get rid of some of it, find a potential use for it, or find a better way to store it.

It’s like fishing, but the final catch you want (the actionable intelligence) requires an unknown number of different types of fish from different parts of a rapidly growing lake to make a perfect meal that you have yet to imagine. So how do you best manage your lake?
