Since the emergence of the data age, we have been taught the 4 Vs of good datasets: volume, velocity, variety, and veracity. These are the qualities that have given rise to the big data companies that have come to dominate the growing use of machine learning (ML) and artificial intelligence (AI). Those with the resources to secure the velocity, variety, and veracity of great volumes of data have been able to lead the data race through sheer problem-solving capacity. Recently, however, new approaches have emerged that disrupt the core principle of volume and give rise to a small data counter-revolution.

The first is transfer learning, which reuses elements of big data models pre-trained for other tasks to tackle a new problem for which data may be difficult, or impossible, to collect. Google's open-source BERT model, for example, has about 340 million parameters in its largest original version, while OpenAI's closed GPT-3 is orders of magnitude bigger, with 175 billion parameters; both were developed to tackle the problem of understanding natural human language. It turns out, however, that models pre-trained this way can be adapted to narrower language tasks with only a small amount of task-specific data, through techniques like few-shot learning.

“Taking essentially the entire internet as its tangential domain, GPT-3 quickly becomes proficient at these novel tasks by building on a powerful foundation of knowledge, in the same way Albert Einstein wouldn’t need much practice to become a master at checkers,” says Jiang Chen, VP of Machine Learning, Moveworks. “And although GPT-3 is not open source, applying similar few-shot learning techniques will enable new ML use cases in the enterprise — ones for which training data is almost nonexistent.”

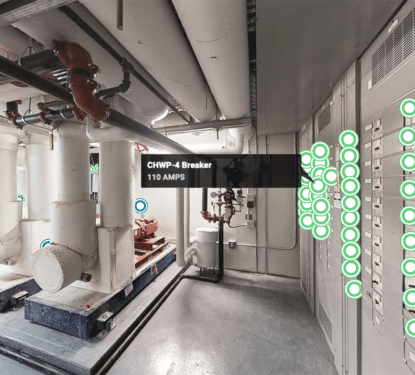

While big data is necessary to solve broad problems like natural language processing, small data models can draw on just the parts of that big data they need to solve specific issues, such as building automated support. Rather than learning to understand language in general, small data systems can focus on company- and task-specific language through few-shot, one-shot, and even zero-shot machine learning techniques.
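One common way to do few-shot classification on top of a pre-trained model is to embed the handful of labeled examples for each class, average them into a single "prototype," and assign each new input to the most similar prototype. The sketch below assumes the embeddings already exist: the short toy vectors stand in for the output of a pre-trained encoder, and the intent labels are hypothetical.

```python
import math

def centroid(vectors):
    """Average equal-length vectors into one prototype vector."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def build_prototypes(support):
    """Map each label to the centroid of its few example embeddings."""
    return {label: centroid(vecs) for label, vecs in support.items()}

def classify(query, prototypes):
    """Return the label whose prototype is most similar to the query."""
    return max(prototypes, key=lambda label: cosine(query, prototypes[label]))

# Hypothetical embeddings: two examples per company-specific intent.
support = {
    "reset_password": [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]],
    "request_laptop": [[0.1, 0.9, 0.2], [0.0, 0.8, 0.3]],
}
prototypes = build_prototypes(support)
print(classify([0.85, 0.15, 0.05], prototypes))  # → reset_password
```

Because only the prototypes are computed from the small labeled set, adding a new company-specific class needs just a few examples rather than retraining the underlying model.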

“For example, if we have a problem of categorizing bird species from photos, some rare species of birds may lack enough pictures to be used in the training images. Consequently, if we have a classifier for bird images, with the insufficient amount of the dataset, we’ll treat it as a few-shot or low-shot machine learning problem,” explains Dr. Michael J. Garbade, Founder of Education Ecosystem. “If we have only one image of a bird, this would be a one-shot ML problem. In extreme cases, where we do not have every class label in the training, and we end up with 0 training samples in some categories, it would be a zero-shot ML problem.”
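The distinctions in the bird example come down to how many labeled training examples each class has. The sketch below encodes that rule; the cutoff of five examples for "few-shot" is an arbitrary, hypothetical threshold, since there is no single agreed number, and the species counts are invented for illustration.

```python
def shot_regime(samples_per_class, few_shot_max=5):
    """Label each class by the number of training examples available for it.

    few_shot_max is a hypothetical cutoff chosen for illustration.
    """
    regimes = {}
    for label, count in samples_per_class.items():
        if count == 0:
            regimes[label] = "zero-shot"
        elif count == 1:
            regimes[label] = "one-shot"
        elif count <= few_shot_max:
            regimes[label] = "few-shot"
        else:
            regimes[label] = "standard"
    return regimes

# Invented image counts for a bird-species classifier.
counts = {"sparrow": 1200, "kakapo": 4, "ivory-billed woodpecker": 1, "dodo": 0}
print(shot_regime(counts))
# → {'sparrow': 'standard', 'kakapo': 'few-shot', 'ivory-billed woodpecker': 'one-shot', 'dodo': 'zero-shot'}
```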

When smaller companies can use large open-source pre-trained models, like BERT, to train their ML systems for specific challenges, the whole data landscape changes. Previously, only companies with the resources to collect, store, and process huge volumes of data could create the most effective ML systems; now even small companies can create effective solutions, albeit to more specific problems. Small companies have small data, but they are learning to gather and apply it better, and they are also learning to share.

Another approach disrupting the traditional data hierarchy is collective learning, in which many smaller companies working on the same problem combine their data through a third party to develop their models beyond what their limited resources would allow alone. More recently, the company- and task-specific qualities of transfer learning models have been combined with collective learning approaches, and the resulting data is shedding new light on, for example, the patterns of language found for the same task across different companies.
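In its simplest reading, collective learning means the third party concatenates the labeled examples each participant contributes, so that a class one company has seen only once or twice gains examples from everyone else. The sketch below shows only that pooling step; the company names, tickets, and labels are hypothetical, and a real arrangement would also have to handle privacy and data-sharing terms.

```python
from collections import Counter

def pool_datasets(datasets):
    """Concatenate the (text, label) pairs contributed by each company.

    A hypothetical third party would run this over data shared by participants.
    """
    pooled = []
    for examples in datasets.values():
        pooled.extend(examples)
    return pooled

def examples_per_label(examples):
    """Count how many labeled examples exist for each label."""
    return Counter(label for _, label in examples)

# Hypothetical help-desk tickets from three small companies.
datasets = {
    "company_a": [("can't log in", "reset_password"), ("need a new laptop", "request_laptop")],
    "company_b": [("forgot my password", "reset_password")],
    "company_c": [("locked out of my account", "reset_password"),
                  ("laptop screen cracked, need a replacement", "request_laptop")],
}
for name, examples in datasets.items():
    print(name, dict(examples_per_label(examples)))

pooled = pool_datasets(datasets)
print("pooled", dict(examples_per_label(pooled)))
# → pooled {'reset_password': 3, 'request_laptop': 2}
```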

“The combination of transfer learning and collective learning, among other techniques, is quickly redrawing the limits of enterprise ML,” says Chen. “For example, pooling together multiple customers’ data can significantly improve the accuracy of models designed to understand the way their employees communicate. Well beyond understanding language, of course, we’re witnessing the emergence of a new kind of workplace — one powered by machine learning on small data.”

Small data does not replace big data; it simply provides a platform for smaller companies to better serve their customers with ML and AI services, thereby democratizing the data business landscape. However, the evolution of small data through transfer learning, collective learning, and their combination is creating new insight that big data may not have discovered alone. Big data companies will no doubt be investigating the potential of small data processes to support a wide range of goals, and they also have the resources to rule small data in a big way.